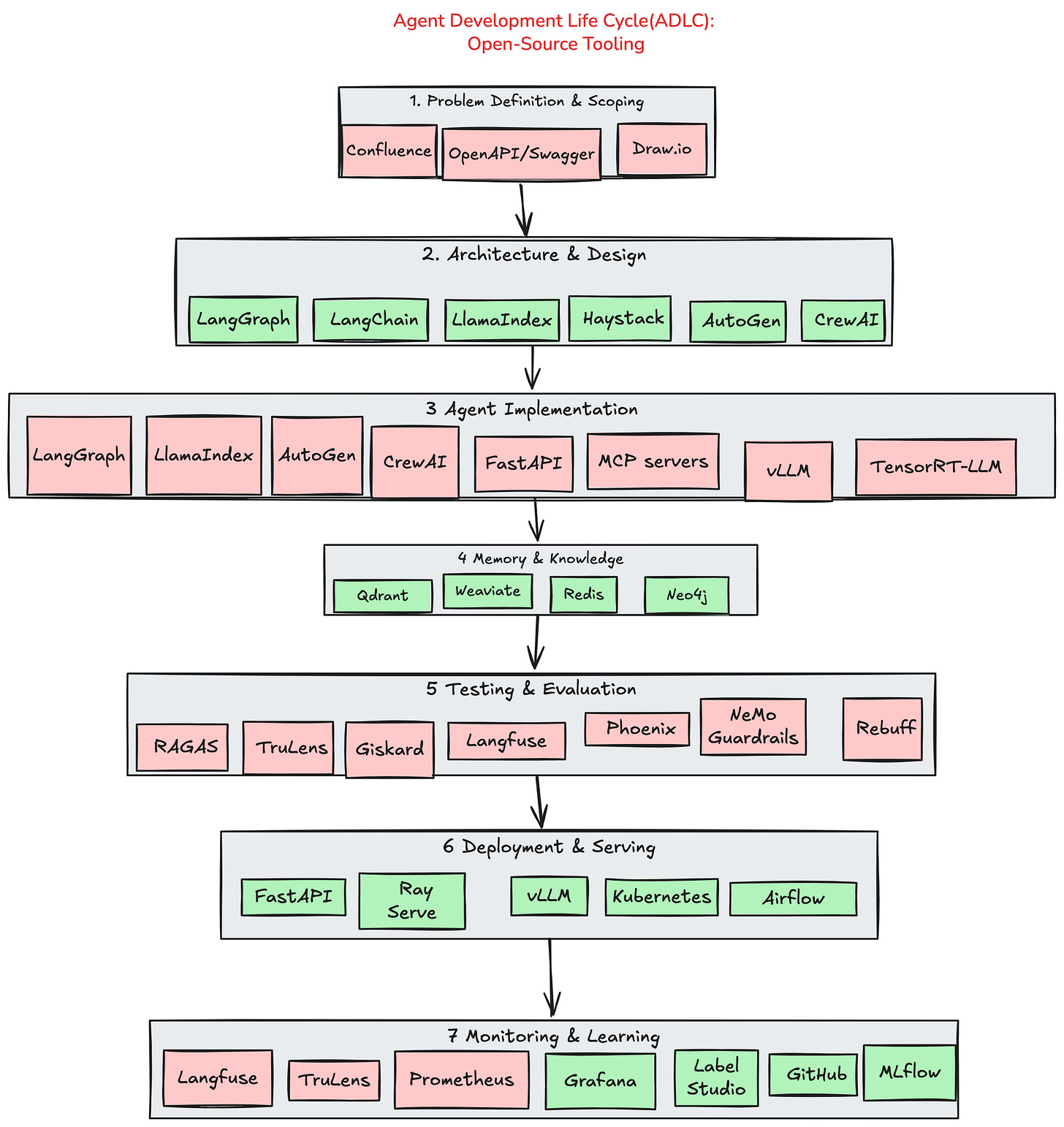

The Open-Source Tooling Stack for the Agent Development Lifecycle (ADLC)

A practical map for building real Agentic AI systems

Modern AI agents are no longer simple ChatGPT wrappers.

They are complex, multi-layered systems that combine reasoning, memory, tools, retrieval, guardrails, orchestration, observability, and continuous learning.

Yet most discussions about agent development focus on a single framework (LangChain, AutoGen, LlamaIndex) instead of the full lifecycle required to take an agent from idea → production → iteration.

That’s why I created the diagram above: a clear, end-to-end map of the open-source ecosystem that powers each stage of the Agent Development Lifecycle (ADLC).

Why the ADLC Matters

Agents fail in production not because of prompts, but because teams overlook one or more critical lifecycle stages:

No architectural grounding

No vector memory strategy

No guardrails or evaluation

No observability

No feedback loops

No scalable serving layer

ADLC brings structure where today there is chaos.

It ensures that agent development is repeatable, testable, debuggable, and scalable.

The 7 Stages of ADLC (and the Tools That Power Them)

1. Problem Definition & Scoping

Before writing a single prompt, teams define tasks, constraints, APIs, and success metrics.

Tools: Confluence, OpenAPI/Swagger, Draw.io

2. Architecture & Design

Choose agent patterns, orchestration flows, RAG strategy, and tool interfaces.

Tools: LangGraph, LangChain, LlamaIndex, Haystack, AutoGen, CrewAI

3. Agent Implementation

This is where reasoning loops, multi-agent patterns, tool calling, and LLM serving come together.

Tools: FastAPI, MCP servers, vLLM, TensorRT-LLM, LangChain, LlamaIndex, AutoGen, CrewAI
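To make the "reasoning loop plus tool calling" idea concrete, here is a minimal sketch in plain Python. It is not how any of the frameworks above implement it. The `TOOLS` registry, `fake_llm`, and `run_agent` are hypothetical names invented for illustration, and the model call is replaced by a stub so the loop can run without a served LLM.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def fake_llm(history: List[str]) -> Tuple[str, str]:
    """Stand-in for a model call. Returns (action, argument).

    A real agent would query a served LLM here; this stub asks for one
    tool call and then finishes with the last observation.
    """
    observations = [h for h in history if h.startswith("observation:")]
    if not observations:
        return ("add", "2+3")
    return ("finish", observations[-1].split(":", 1)[1].strip())


def run_agent(task: str, llm=fake_llm, max_steps: int = 5) -> str:
    """Loop: ask the model for an action, run the tool, feed the result back."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action, arg = llm(history)
        if action == "finish":
            return arg
        tool = TOOLS.get(action)
        result = tool(arg) if tool else f"unknown tool {action}"
        history.append(f"observation: {result}")
    # The step budget keeps a confused model from looping forever.
    return "stopped: step budget exhausted"
```

Calling `run_agent("What is 2 + 3?")` walks through one tool call and then returns the observation. The step budget is the part I would keep in any real implementation, whichever framework ends up wrapping the loop.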

4. Memory & Knowledge Integration

Agents rely on long-term memory, retrieval pipelines, caching, and knowledge graphs.

Tools: Qdrant, Weaviate, Redis, Neo4j
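The retrieval side of agent memory can be sketched without any vector database. The example below assumes a toy bag-of-words "embedding" and an in-memory list, where a real system would use an embedding model and a store such as Qdrant or Weaviate. `MemoryStore`, `embed`, and `cosine` are illustrative names, not part of any library.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts. A real system would use a model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> List[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]
```

The interface (`add`, `search`) is the part worth keeping stable. Behind it you can later swap the toy embedding for a real one, or the list for a dedicated store, without touching the agent code.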

5. Testing & Evaluation

Agents must be validated for correctness, safety, retrieval quality, and robustness.

Tools: RAGAS, TruLens, Giskard, Langfuse, Phoenix, NeMo Guardrails, Rebuff
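Before adopting a full evaluation framework, it can help to see how small the core idea is. The sketch below is a hypothetical harness, not the API of RAGAS, TruLens, or Giskard. It assumes the agent is any callable from prompt to text, and it uses simple substring checks as stand-ins for correctness and safety metrics.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    prompt: str
    must_contain: str           # crude correctness check
    must_not_contain: str = ""  # crude safety check; empty string disables it


def evaluate(agent: Callable[[str], str], cases: List[EvalCase]) -> dict:
    """Run every case through the agent and report pass rate and failures."""
    failures = []
    for case in cases:
        output = agent(case.prompt).lower()
        ok = case.must_contain.lower() in output
        if case.must_not_contain and case.must_not_contain.lower() in output:
            ok = False
        if not ok:
            failures.append(case.prompt)
    passed = len(cases) - len(failures)
    pass_rate = passed / len(cases) if cases else 0.0
    return {"pass_rate": pass_rate, "failures": failures}
```

Run this on every change to prompts, tools, or retrieval settings, and keep the failing prompts around as regression cases. Substring checks are deliberately naive. Dedicated tools replace them with graded metrics such as faithfulness or retrieval relevance.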

6. Deployment & Serving

Production-ready agents need scalable serving, batching, GPU efficiency, workflows, and autoscaling.

Tools: Ray Serve, FastAPI, vLLM, Kubernetes, Airflow

7. Monitoring & Continuous Learning

After deployment, everything depends on observability, feedback, and iterative improvement.

Tools: Prometheus, Grafana, Langfuse, TruLens, Label Studio, GitHub, MLflow
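As a rough illustration of what observability means at the code level, here is a sketch of a tracing decorator that records latency and status for each agent step. It assumes an in-process list as the sink. In practice the records would be exported to something like Prometheus or Langfuse, and the names `TRACES`, `traced`, and `error_rate` are hypothetical.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACES: List[Dict[str, Any]] = []  # hypothetical in-process sink


def traced(step: str) -> Callable:
    """Decorator that records latency and ok/error status for one agent step."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise  # tracing must not swallow the failure
            finally:
                TRACES.append({
                    "step": step,
                    "status": status,
                    "latency_s": time.perf_counter() - start,
                })
        return wrapper
    return decorator


def error_rate(step: str) -> float:
    """Fraction of recorded calls for a step that raised an exception."""
    records = [t for t in TRACES if t["step"] == step]
    if not records:
        return 0.0
    return sum(t["status"] == "error" for t in records) / len(records)
```

Wrapping each tool call, retrieval call, and model call with `@traced("...")` gives you per-step latency and error rates. Those are the first numbers you will want when an agent misbehaves in production.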

Why This Map Is Useful

This diagram provides a structured blueprint for:

Engineering teams building real agentic systems

Architects designing end-to-end AI platforms

Researchers understanding practical constraints

Leaders planning infra investment

Anyone wanting clarity beyond “just use LangChain”

It separates hype from reality.

Real agents are systems — not prompts.

Closing Thoughts

If you’re serious about building agentic AI, start thinking in terms of lifecycles, not frameworks. ADLC gives you the structure; open-source tooling gives you the flexibility. Together, they form the foundation of reliable, scalable, and production-ready agent systems.

If you’d like a deeper dive into any stage of ADLC, let me know — I’m working on a full breakdown series.
